An article from the Chronicle of Higher Education popped up that once again highlighted the information needs (or lack thereof) of college students. This back-and-forth over whether libraries and librarians are still necessary in the face of Google-ization is a recent phenomenon. For every viewpoint that the Internet has replaced the information services of libraries, there is the stance that users are even more confused by information overload and the mess that is the Web.

I tend to agree with what Dennis Dillon says in a new article, Google, Libraries, and Knowledge Management: From the Navajo to the National Security Agency. Libraries and the 'Net are different entities: libraries play the library game, not the information game. Google is the same for everyone. It is not tailored to different user groups, and it does not change as local users' needs shift. Google's very nature is different from that of libraries.

Here's the kicker, folks: we could wake up tomorrow to the news that a banking conglomerate has purchased Google, intends to turn it into a private corporate information tool, and wants to convert the content to French. Although this is just a silly hypothetical, Dillon makes a good point: people and organizations such as Google are not playing the same game as libraries.

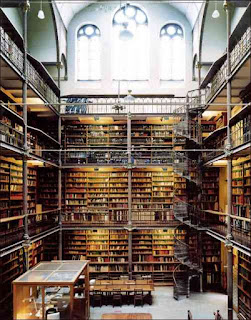

Perhaps this is what libraries with foresight, such as McMaster University Libraries, are doing: integrating new technologies to supplement and complement existing facilities before it's too late. I personally talk a great deal about emerging technologies, particularly Web 3.0 and the Semantic Web, but I believe these are ultimately mere tools that serve the growing organism that is the library. In the end, interior design is every bit as relevant to how users perceive the library's physical spaces as Facebook is to increasing outreach to students. But put the two together, and we pack a powerful punch. Dillon leaves us with a refreshing yet somewhat disconcerting comment:

> Libraries have become so enamoured of technology that we sometimes cannot see what is in front of our faces, which is that there are still people in our buildings and they are there for a reason.